Prisoners and Fairness


Fairness is rarely mentioned by name, yet it is an important concept in computing. Its applications appear in different domains. Even when it's not spoken about in the documentation and descriptions of systems, I sometimes notice, "hey, for this to work, fairness is required!" So, what's it all about?

Prisoners

The first place where I unexpectedly encountered fairness was the prisoners riddle. Here's how it's usually formulated:

100 prisoners are sentenced to death. However, they're given a chance to survive. In one of the cells, there's a light bulb, which is initially off, and a switch connected to it, which can turn the bulb on and off. In an arbitrary order, which is not known to any of them, the jailer lets prisoners into the cell; each of them can observe whether the bulb is on or off and, optionally, flip the switch.

If a prisoner, after visiting the cell, says that every prisoner visited the cell at least once, and he's right, then everybody is set free. If he's wrong, then every prisoner is executed. Of course, every prisoner can pass and say nothing; then the iterations continue.

The prisoners can't talk to each other after this trial begins; they're kept in solitary. However, before the trial starts, they're given a chance to make up a plan, which they will then follow. Can the prisoners come up with an arrangement that guarantees they are set free, no matter in which order they are let into the room?

There are just a couple of riddles of this sort that I don't consider stupid, and this is one of them. Take time to solve it, and you'll be satisfied with the process and the result. You can keep reading, though, there won't be spoilers.

So, does the problem look sound? Is its definition complete? It sure is not. Indeed, it's quite possible that one prisoner never enters the room at all! Since they enter the cell in an arbitrary order, not a random one, nothing rules this out. And if someone never enters the cell, the statement "everyone was here" is never true. So we should conclude that no matter how the prisoners decide to act, they may never gain their freedom.

This makes the problem uninteresting. What should we add so that it has a non-trivial answer? You already got the idea: we should enforce fairness.

Definition of fairness

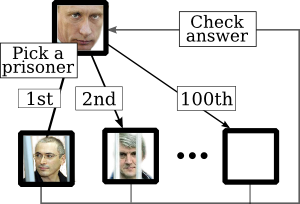

Picture A

A simple state machine for the jailer. The jailer nondeterministically picks one of the prisoners, lets him into the bulb room, and checks his answer, if any.

So let's formalize the work of the jailer a bit. All prisoners reside in their separate cells, and sometimes the jailer decides to open one and invite its inhabitant into the bulb room. If the prisoner doesn't decide to make a final guess, then the jailer picks a cell to open again. This can be modeled as a simple state machine. See picture A.

The jailer makes an arbitrary choice of whom to invite next. But would it be fair if a certain prisoner were never let into the bulb room, as we've shown before? It wouldn't. And it wouldn't be any fairer if a prisoner were no longer let into the room after a certain moment. It's just the same as if he were never let in, because up to that moment the prisoners' plan might not have moved forward. (My argumentation is kinda weak, but I try to avoid spoilers.)

So the work of the jailer is fair if it never happens that, after some moment of time, a prisoner is no longer let into the bulb cell. This is closely tied to the concept of fairness in computing.
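To make the distinction concrete, here is a minimal sketch of two jailers, written as infinite Python generators. The names `round_robin_jailer`, `forgetful_jailer` and `last_visit` are hypothetical and made up for this illustration; round robin is just one of many possible fair orders, and a finite prefix of visits can only hint at unfairness, never prove fairness.

```python
from itertools import count, islice
from typing import Iterator


def round_robin_jailer(prisoners: int) -> Iterator[int]:
    """Fair: cycles through all cells forever, so nobody is left out for good."""
    for step in count():
        yield step % prisoners


def forgetful_jailer(prisoners: int, forgotten: int, cutoff: int) -> Iterator[int]:
    """Unfair: acts like round robin, but once `cutoff` visits have happened,
    the `forgotten` prisoner is never invited again."""
    visits = 0
    for step in count():
        chosen = step % prisoners
        if visits >= cutoff and chosen == forgotten:
            continue  # this cell stays closed from now on
        visits += 1
        yield chosen


def last_visit(schedule: Iterator[int], prisoner: int, horizon: int) -> int:
    """Index of the prisoner's last visit among the first `horizon` visits (-1 if none)."""
    last = -1
    for i, chosen in enumerate(islice(schedule, horizon)):
        if chosen == prisoner:
            last = i
    return last
```

With the forgetful jailer, the last visit of the forgotten prisoner stays at the same index no matter how far the horizon is extended; with the round-robin jailer it keeps moving forward.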

In one of my previous posts, the one about the search for a declarative language, I came up with my own, generic definition of fairness. I'll quote it, slightly reworded, here:

Fairness is a property of a nondeterministic system that repeatedly, infinitely many times, faces a choice among several options: it disallows any behavior in which some option is never chosen again after a certain moment of time.
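The same definition can be written down as a formula. This is only a sketch in my own notation, which does not appear in the quoted post: $c(t)$ is assumed to be the option chosen at step $t$, and $1, \dots, n$ are the options.

```latex
% A behavior c(1), c(2), ... is fair if every option keeps being chosen:
\[
  \forall i \in \{1, \dots, n\} \;\; \forall t \;\; \exists t' > t : \; c(t') = i .
\]
% Negating it gives the forbidden, unfair behavior:
% some option i is never chosen after some moment t.
\[
  \exists i \in \{1, \dots, n\} \;\; \exists t \;\; \forall t' > t : \; c(t') \neq i .
\]
```

In other words, a fair behavior chooses every option infinitely often.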

For this prisoners riddle to be interesting and well-defined, prisoners should be let into the bulb room in a fair manner.

Applications of fairness

Task scheduling

The scheduler implemented in your operating system is one of the most important parts that affect its performance. If your system supports multitasking, it should share limited CPU time between tasks to reach some goals you set. For a desktop system you might tune your process scheduler to react fast to the mouse clicks you make, while running some tasks in the background (can't help quoting Linus' sarcastic letter on this matter). For a system which doesn't need a small response time, but should effectively schedule long-running tasks that communicate with each other, you might need another scheduling algorithm (see my Stack Overflow question, where I ran into such an inefficiency on a desktop-tuned system).

Usually schedulers work like this: the timeline is divided into chunks, and before the next chunk begins, the scheduler chooses which process gets that chunk of CPU time. Sounds much like the work of that jailer, doesn't it?

In scheduling, fairness has stricter definitions and more complex metrics. They calculate and impose restrictions on the fractions of CPU time allocated to tasks.

Scheduling algorithms should not adhere to communism: some tasks have higher priority than others. But schedulers should be fair when choosing the next process to take a CPU core. Indeed, if they weren't fair, it would be theoretically possible that our programs never get a chance to start if there's something else happening! However, if the scheduler is fair, we at least know that a program will eventually get the CPU time it needs to finish (though we still don't know when exactly).
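As a rough illustration of the "chunks of CPU time" idea, here is a toy round-robin scheduler. It is a sketch under simplifying assumptions (no priorities, no blocking, tasks known up front), not how any real operating system scheduler is implemented; `round_robin` and the task names are hypothetical.

```python
from collections import deque
from typing import Dict, List


def round_robin(tasks: Dict[str, int], slice_len: int = 1) -> List[str]:
    """Return the order in which time chunks are handed out.

    `tasks` maps a task name to the amount of CPU time it still needs.
    """
    queue = deque(tasks.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        timeline.append(name)  # this task gets the next chunk
        remaining -= slice_len
        if remaining > 0:
            queue.append((name, remaining))  # unfinished: back of the line
    return timeline


if __name__ == "__main__":
    print(round_robin({"editor": 2, "compiler": 3, "player": 1}))
```

Every unfinished task goes to the back of the line, so no task can be skipped forever; that is what makes this particular order fair.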

Distributed computing

The first place I heard about fairness as a separate concept was a course on distributed algorithms at my university.

When several machines run in parallel and communicate with each other, it's usually quite a complex system to study and implement. Just recall all those race conditions and concurrency bugs you've faced! To study distributed systems, we should model them in some way.

Actually, what I call "fairness" is known as "strong fairness". There is also a concept of "weak fairness". Its "weakness" is that the system must eventually make a certain choice only if that option stays available all the time from some moment on. If the opportunity regularly disappears, the system may never take it and nevertheless be weakly fair.

The most obvious way to model a distributed system is to pretend that
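The difference between the two is often written in temporal logic. The formulas below are a sketch in common textbook notation, not something taken from my lecture slides: $\Box$ reads "always", $\Diamond$ reads "eventually", and `enabled` and `taken` are names I assume for "the option is available" and "the option is chosen" (the symbols need the `amssymb` package).

```latex
% Weak fairness: an option that stays available forever from some moment on
% is chosen infinitely often.
\[
  \Diamond \Box \, \mathit{enabled} \;\rightarrow\; \Box \Diamond \, \mathit{taken}
\]
% Strong fairness: an option that is available infinitely often
% (even if it regularly disappears) is chosen infinitely often.
\[
  \Box \Diamond \, \mathit{enabled} \;\rightarrow\; \Box \Diamond \, \mathit{taken}
\]
```

The premise of the weak version is harder to satisfy, so it obliges the system in fewer situations.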

- at each moment of time, only one machine works, while all others are paused

- there is an independent "oracle", not associated with any machine, which decides which machine will run next

- the oracle can stop a machine only after it has executed an atomic operation completely
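The three assumptions above can be sketched with Python generators. This is only an illustration: `machine`, `run` and the oracle function are hypothetical names, each `yield` marks the end of one atomic operation, and a random oracle is just one possible choice.

```python
import random
from typing import Callable, Dict, Generator, List

Machine = Generator[None, None, None]


def machine(name: str, steps: int, log: List[str]) -> Machine:
    """A machine that performs `steps` atomic operations, pausing after each."""
    for i in range(steps):
        log.append(f"{name}:{i}")  # one atomic operation, never interrupted
        yield  # only here may the oracle switch to another machine


def run(machines: Dict[str, Machine], oracle: Callable[[List[str]], str]) -> None:
    """Let exactly one machine work at a time until all of them have finished."""
    alive = dict(machines)
    while alive:
        name = oracle(sorted(alive))  # the oracle lives outside of every machine
        try:
            next(alive[name])  # run it up to the end of its next atomic operation
        except StopIteration:
            del alive[name]  # this machine has nothing left to do


if __name__ == "__main__":
    log: List[str] = []
    rng = random.Random(0)
    run({"A": machine("A", 3, log), "B": machine("B", 2, log)}, rng.choice)
    print(log)
```

Swapping the oracle function changes the interleaving of the machines without touching the machines themselves, which is the point of keeping the oracle independent.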

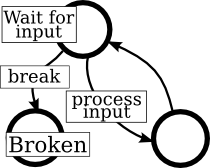

Picture B

An example model of a machine in a network for which fairness isn't the best way to model the machine's behavior. Fairness forces the machine to eventually end up in a "broken" state.

Sometimes it would be nice to assume that the "oracle" makes machines run in a fair manner. However, this assumption is not made very often. Consider that we need to model what happens when a machine in a network breaks. We can model it as having a nondeterministic choice to either break or keep working (see picture B). Enforcing fairness would make us conclude that any machine will eventually break, and this doesn't conform to what we want to model.

Nevertheless, that's how I first learned about fairness. The theory of distributed computing contains a lot of other interesting stuff, though it's nontrivial. Our lectures used slides like these; they might be interesting to read.

State machines

Fairness can also be defined in terms of state machines. You can check the Wikipedia article that has a paragraph about that. Basically, there's nothing new compared to what I described in the previous paragraphs; I even used state machines to demonstrate what I'm talking about.

Implementation of fairness

Fair choice is usually implemented as random choice. The probability distribution doesn't need to be uniform (for example, in a dumb implementation of a scheduler, the probabilities may be tied to task priorities).
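Such a "dumb" scheduler could look roughly like the sketch below. It assumes that priorities are positive integers used directly as weights; `pick_task` and `count_picks` are hypothetical helpers, and the only library call relied on is the standard `random.Random.choices`.

```python
import random
from collections import Counter
from typing import Dict


def pick_task(priorities: Dict[str, int], rng: random.Random) -> str:
    """Pick the next task at random, with probability proportional to its priority."""
    names = list(priorities)
    weights = [priorities[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


def count_picks(priorities: Dict[str, int], rounds: int, seed: int = 0) -> Counter:
    """Count how often each task is picked over `rounds` independent choices."""
    rng = random.Random(seed)
    return Counter(pick_task(priorities, rng) for _ in range(rounds))


if __name__ == "__main__":
    print(count_picks({"low": 1, "normal": 3, "high": 6}, rounds=10_000))
```

As long as every weight is positive, even the lowest-priority task has a nonzero chance of being picked in every round.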

However, this is not completely precise. Fairness requires that every option eventually be chosen. Random choice leaves a theoretical possibility for an option to be completely ignored. And if this is allowed to happen, the choice is not fair anymore. What are the odds of such behavior? Assume we have two options, each chosen with probability ½, and the first option is never chosen. This happens with probability ½⋅½⋅½⋅½⋅..., an infinite product which is equal to exactly zero.

Therefore, the "random" implementation implements fair choice only with 100% probability, but not always. With zero probability the choice would be unfair. Is that too much? Only the requirements of your implementation can tell. After all, we all were born and grew up the way we are with exactly zero probability...

Although fairness is usually implemented as randomness, randomness shouldn't be forced into the specification of a system. The Whenever language I described in one of my posts doesn't really benefit from its choice of a line to execute being random. Fair choice would be more suitable, as some executions, which happen with zero probability, can make a correct Whenever program useless.
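Spelled out as a limit, assuming the rounds are independent of each other:

```latex
\[
  P(\text{option 1 is not chosen in the first } n \text{ rounds})
    = \left(\tfrac{1}{2}\right)^{n},
  \qquad
  \lim_{n \to \infty} \left(\tfrac{1}{2}\right)^{n} = 0 .
\]
% The same holds for any fixed probability p > 0 of choosing option 1:
\[
  \lim_{n \to \infty} (1 - p)^{n} = 0 .
\]
```

So the distribution doesn't have to be uniform for the argument to work; it is enough that every option has some fixed nonzero probability.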

***

Fairness is an important concept in our life. You should be fair to prisoners, and you should be fair when you have power and use it to allocate resources to others. In computing, it's all the same.

Definitions of fairness vary across domains of computing. In this article I described only a couple: "strong" and "weak" fairness as understood in the theory of distributed computing. Instead of being "totally" fair or unfair, fairness may be a metric, and the system's behavior is then defined so that this metric is not less than a certain value.

The exact definition of fairness is complex and hard to coin in a general way: it becomes too vague. I made such an effort in the middle of this article, but if you have a better idea, it's welcome in the comments. Nevertheless, I hope this post gave you at least a notion of what fairness is. And I also hope that when you notice it in a system you design, use or encounter, you'll use the correct and concise word to describe its behavior.